If you have tried older AI image tools in a real workflow, you probably know the pattern already. The first result looks impressive. The second result is kind of usable. Then you notice the text is broken, the layout is weird, or the edit changed half the image you wanted to keep. That is exactly why so many teams are suddenly paying attention to GPT Image 2 use cases.

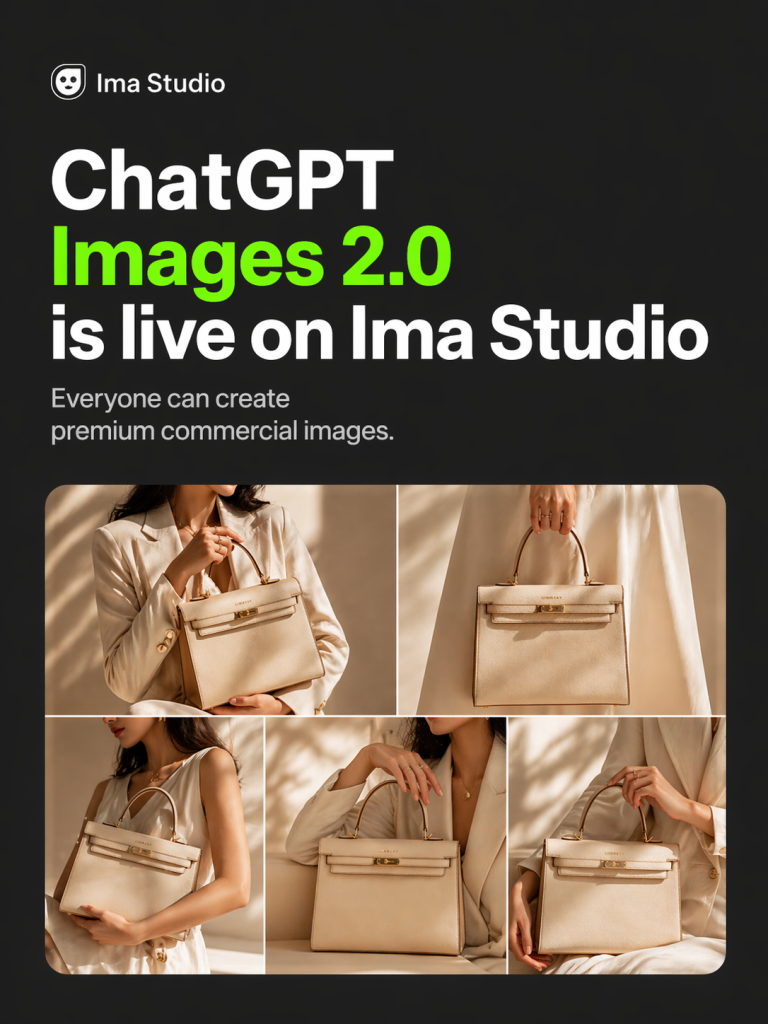

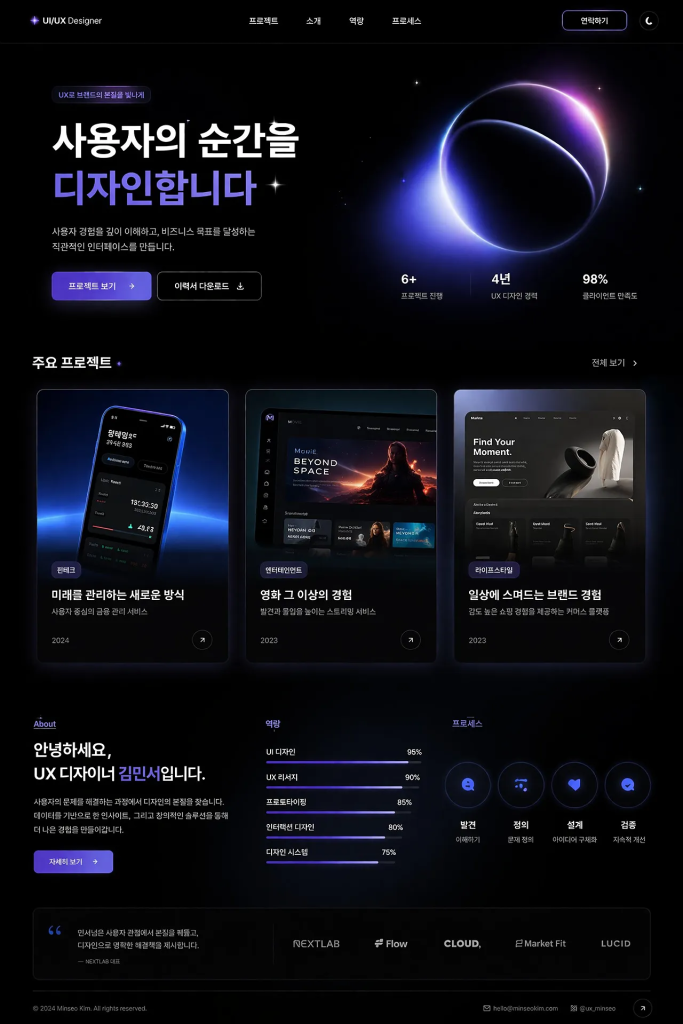

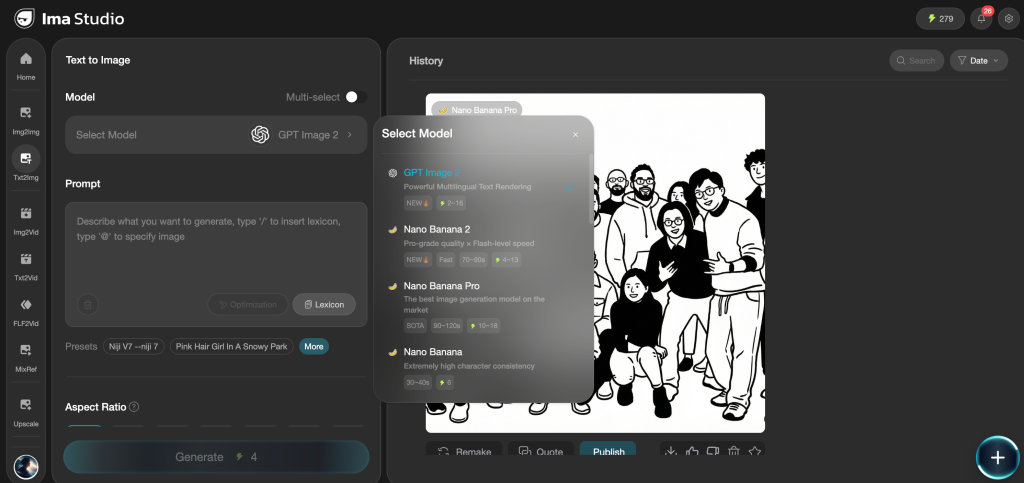

The promotional poster for Ima Studio below was generated with a single click using GPT Image 2.

What makes GPT Image 2 interesting is not just that it can create pretty images. A lot of models can do that. The more important shift is that it is starting to work in the places where businesses actually spend time and money: ad creatives, product mockups, social graphics, infographics, UI screens, and controlled edits to existing brand assets.

In plain English, GPT Image 2 feels less like a toy for inspiration and more like a practical production assistant. It is better at text-in-image tasks, stronger at following detailed instructions, and more reliable when you need an image to match a business purpose instead of just looking cool.

So in this article, I am not going to list random prompts. I am going to walk through the best GPT Image 2 use cases that actually make sense for marketers, ecommerce teams, designers, content teams, and product people. I will also include concrete examples, so each section feels less theoretical and more like something a real team could try this week.

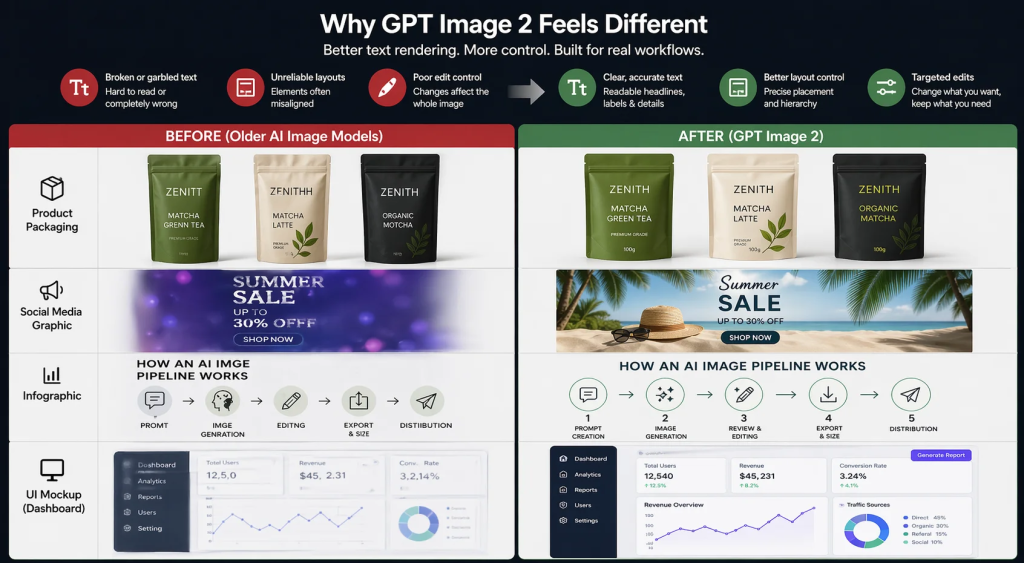

Why GPT Image 2 feels different from older image models

The biggest reason is simple: text. For a long time, AI image tools were fine if you wanted fantasy art, moodboards, or rough visual ideas. But the moment you asked for a product package with readable copy, a social graphic with a real headline, or an infographic with labels that had to be correct, things fell apart fast.

GPT Image 2 is not perfect, but it is clearly better in the areas that matter for production work. Based on OpenAI’s own prompting guidance and broader market commentary, the model is especially strong for text-heavy images, photorealism, brand-sensitive edits, infographics, UI mockups, and high-precision creative workflows. That is a big deal because those are exactly the tasks where previous models created the most frustration.

There is also a second reason this model matters: it is easier to control. You can be more specific about what you want. For example, instead of saying “make a product ad,” you can describe the platform, the layout, the CTA placement, the lighting, the mood, the brand colors, and even which parts of the original image should stay untouched. The more your workflow depends on precision, the more that control matters.
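To make that concrete, here is a minimal sketch of how a team might turn those details into a prompt. The `CreativeBrief` class and `brief_to_prompt` function are hypothetical names I made up for illustration. Nothing here calls an image API; it only builds the text you would paste or send.

```python
from dataclasses import dataclass, field


@dataclass
class CreativeBrief:
    """Hypothetical container for the details a specific prompt should carry."""
    asset_type: str
    platform: str
    layout: str
    cta_placement: str
    lighting: str
    mood: str
    brand_colors: list[str] = field(default_factory=list)
    keep_unchanged: list[str] = field(default_factory=list)


def brief_to_prompt(brief: CreativeBrief) -> str:
    """Turn the brief into one plain-language prompt, one detail per sentence."""
    parts = [
        f"Create a {brief.asset_type} for {brief.platform}.",
        f"Layout: {brief.layout}.",
        f"CTA placement: {brief.cta_placement}.",
        f"Lighting: {brief.lighting}.",
        f"Mood: {brief.mood}.",
    ]
    # Optional details are only mentioned when the brief actually has them.
    if brief.brand_colors:
        parts.append("Brand colors: " + ", ".join(brief.brand_colors) + ".")
    if brief.keep_unchanged:
        parts.append("Keep unchanged: " + ", ".join(brief.keep_unchanged) + ".")
    return " ".join(parts)


brief = CreativeBrief(
    asset_type="product ad",
    platform="Instagram feed",
    layout="product centered, headline above",
    cta_placement="bottom right",
    lighting="soft daylight",
    mood="calm and premium",
    brand_colors=["forest green", "cream"],
    keep_unchanged=["the bottle shape", "the logo"],
)
print(brief_to_prompt(brief))
```

The point of the structure is not the code itself. It is that every field is a decision someone on the team has to make before the prompt is written, which is usually where vague prompts go wrong.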

One helpful way to think about it is this: older models were often best for inspiration, while GPT Image 2 is much better for workflow completion. That is why the best GPT Image 2 use cases are usually tied to repeatable team tasks, not one-off experiments.

1. Product packaging mockups

Packaging is one of the clearest examples of where GPT Image 2 can save time. In a normal workflow, if a team wants to test three packaging directions for a new drink, supplement, or skincare product, it usually needs a designer to lay out each option, mock it up on a 3D box or bottle, and then revise the copy once feedback comes in. That is expensive and slow, especially if the product is still in concept stage.

With GPT Image 2, the process gets much faster. You can describe the packaging type, brand style, colors, tone, and key text elements, then ask for a photorealistic mockup. The real unlock here is that the label text has a much better chance of coming out readable and properly placed than with older models.

Example scenario: imagine a startup validating a new matcha product. Instead of waiting on a full design round, the founder can ask for three pouch designs: one minimal, one premium-lifestyle, and one bold DTC style. Each version can include the product name, a short benefit line, and a weight label. That is good enough for internal review, investor decks, or even early landing page testing.

Why this use case works: packaging sits right at the intersection of branding, product visualization, and text accuracy. If the text is wrong, the mockup is useless. If the lighting and materials look fake, the concept feels weak. GPT Image 2 is strong enough in both areas to make this a genuinely useful workflow.

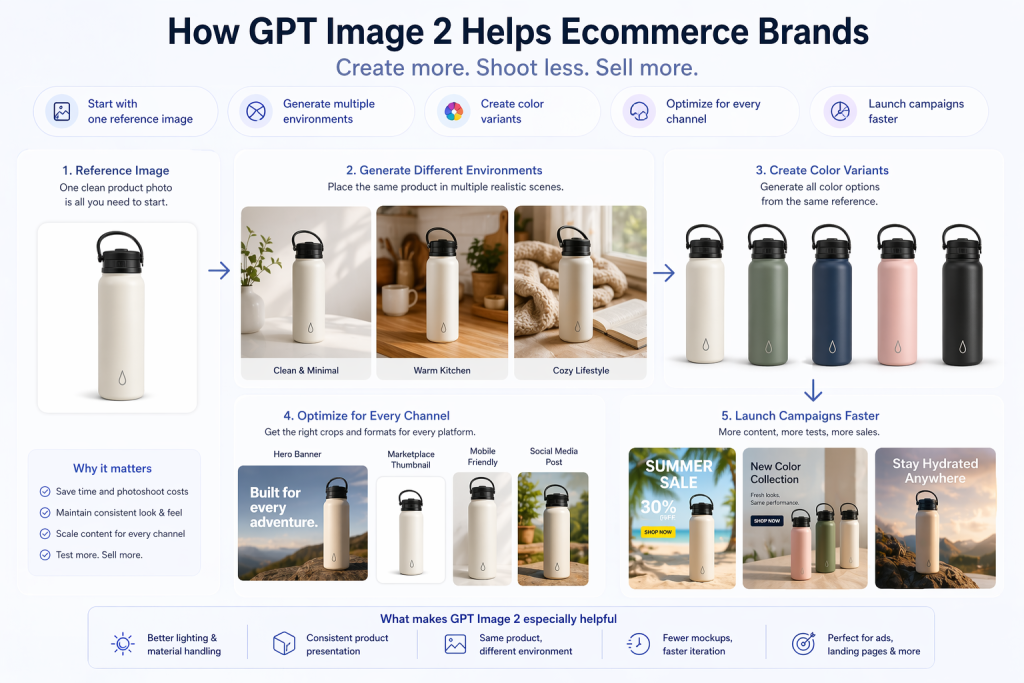

2. Ecommerce product photography and product variants

If you run ecommerce, you already know how expensive images become at scale. It is not just the hero shot. You need clean product-on-white photos, seasonal visuals, lifestyle scenes, mobile-friendly crops, marketplace thumbnails, and sometimes multiple colorways of the same product. A studio shoot for every combination gets expensive very quickly.

This is one of the strongest GPT Image 2 use cases for ecommerce. The model can help teams start from a reference image and then generate new visual contexts without reshooting everything. That means you can test a ceramic mug on a clean white background, on a warm wooden kitchen counter, and in a cozy lifestyle setup without organizing three different photo shoots.

Example scenario: imagine a Shopify store with five colors of the same water bottle. Instead of shooting all five in multiple environments, the team can use one strong reference image and then generate variant imagery for different landing pages, ad sets, or seasonal campaigns. That is especially helpful when you need a fast turnaround for promotions.
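A quick sketch of what that looks like as a prompt matrix. The color and environment lists are made-up examples, and `variant_prompts` is a hypothetical helper; the idea is simply that one reference image plus a small grid of prompts replaces a grid of photo shoots.

```python
from itertools import product

# Illustrative values only; swap in your own colorways and scenes.
colors = ["black", "white", "sage green", "navy", "coral"]
environments = [
    "a clean white studio background",
    "a warm wooden kitchen counter",
    "a gym bench in morning light",
]


def variant_prompts(product_name, colors, environments):
    """Yield (color, environment, prompt) for every color and environment pair."""
    for color, env in product(colors, environments):
        prompt = (
            f"Using the reference image of the {product_name}, show the {color} "
            f"version on {env}. Keep the product shape, proportions, and logo "
            "exactly as in the reference."
        )
        yield color, env, prompt


prompts = list(variant_prompts("water bottle", colors, environments))
print(len(prompts))  # 15 prompts: 5 colors x 3 environments
```

In practice you would probably not generate all fifteen at once. Start with the combinations tied to a live campaign and expand from there.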

What makes GPT Image 2 especially helpful here: it handles lighting, materials, and product presentation more reliably than many older tools, and it is also useful for “same product, different environment” workflows. In practice, that means fewer manual mockups and faster experimentation.

3. Localized marketing creatives for different markets

This is one of the most underappreciated use cases. A lot of teams do not struggle because they cannot make one good ad. They struggle because they need to make twenty slightly different versions of the same ad for different languages, regions, channels, and formats.

That is where GPT Image 2 gets very interesting. Microsoft’s Foundry team highlighted this kind of scenario directly: a small design team running a global campaign often has the creative vision, but not the resources to reshoot, resize, relayout, and localize everything for each market at the same time. GPT Image 2 makes that much more realistic.

Example scenario: a global SaaS company wants to run the same promo in the US, Brazil, Japan, and Germany. The core offer stays the same, but the text, visual emphasis, and sometimes even the cultural context need to shift. Instead of rebuilding each asset from scratch, the team can generate localized versions that preserve the campaign feel while adapting copy and layout.

Why this matters: localization usually creates hidden design debt. Every extra region multiplies workload. If GPT Image 2 can reduce that cost, even partially, it becomes valuable very quickly.

4. UGC-style ad creatives for paid social

Performance marketers are always looking for more testing volume. The problem is that producing new creatives fast enough is hard. Polished studio ads do not always win, and real creator content takes time to source, brief, and edit. So teams often get stuck in a middle ground where they know they need more creative variation, but they cannot produce it fast enough.

This is why UGC-style imagery is one of the best GPT Image 2 use cases. The model can generate casual-looking product-in-hand shots, native-looking social visuals, testimonial-style statics, and other ad concepts that feel closer to what users already see in-feed.

Example scenario: a DTC skincare brand wants to test three angles: before/after improvement, “routine shelfie” lifestyle imagery, and a user-review-style ad with text overlays. Even before producing full video content, the team can use GPT Image 2 to generate multiple static concepts that help identify which angle is worth pushing harder.

Important note: this does not replace real creators or authentic customer content. But it is excellent for rapid concept development, creative direction, and early-stage testing.

5. Social graphics that actually have readable text

This sounds simple, but it is a real pain point. Social teams produce quote cards, launch images, carousel covers, event promos, stat graphics, and community updates constantly. These are not complex assets, but they depend on one thing: the text has to be right.

With older image models, you could sometimes get something visually nice, then still end up opening Canva or Figma to rebuild the copy placement manually. GPT Image 2 is much better for these situations because text rendering is one of its headline strengths.

Example scenario: your team needs a fast social card that says “10 Best GPT Image 2 Use Cases” with a subtitle like “Marketing, Ecommerce, and Design.” With GPT Image 2, you have a much better chance of getting a usable first draft, especially if you clearly specify the headline, hierarchy, alignment, and color palette.

Extra tip from OpenAI’s prompting guide: when the exact wording matters, put the literal text in quotes and be explicit about placement and typography. That makes a real difference for CTA buttons, headlines, or small labels.
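Here is a small sketch of that tip applied to the social card example above. The `text_element` helper is a hypothetical name, and the background and typography values are assumptions I picked for illustration.

```python
def text_element(text: str, role: str, placement: str, typography: str) -> str:
    """Describe one piece of on-image copy with its literal wording in quotes."""
    # Swap straight double quotes inside the copy for single quotes so the
    # quoted span in the prompt stays unambiguous.
    safe = text.replace('"', "'")
    return f'{role} reads exactly "{safe}", placed {placement}, set in {typography}.'


elements = [
    text_element(
        "10 Best GPT Image 2 Use Cases",
        "Headline", "top center", "bold sans-serif",
    ),
    text_element(
        "Marketing, Ecommerce, and Design",
        "Subtitle", "directly below the headline", "regular sans-serif",
    ),
]
prompt = "Square social card with a dark blue background. " + " ".join(elements)
print(prompt)
```

Each text element gets its own sentence with wording, placement, and typography together, so nothing about the copy is left for the model to guess.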

6. Infographics and data visualizations

This is one of my favorite categories because it used to be one of the hardest for AI image generation. Infographics need structure. They need labels. They need hierarchy. They need the image to communicate information, not just style. So even when an older model created something visually attractive, it often failed at the actual job.

GPT Image 2 is meaningfully better here. The OpenAI Cookbook specifically highlights infographics, diagrams, and structured visuals as strong use cases. It even includes example prompts for things like a detailed infographic explaining how an automatic coffee machine works, with labeled components and a clear technical flow.

Example scenario: imagine a content marketer writing about “How an AI image pipeline works.” Instead of briefing a designer for a one-off explainer graphic, they can prompt GPT Image 2 for a clean vertical infographic with labeled stages like prompt creation, generation, review, editing, export, and distribution. Is it always publication-perfect on the first try? No. But it can get surprisingly close, and more importantly, it gives you a strong draft very fast.
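For the pipeline example, a rough sketch of how the prompt could be assembled so every stage label is spelled out and numbered. The `infographic_prompt` function and the styling sentence at the end are my own assumptions, not wording from any official guide.

```python
stages = [
    "Prompt creation",
    "Generation",
    "Review",
    "Editing",
    "Export",
    "Distribution",
]


def infographic_prompt(title, stages):
    """Build a multi-line prompt that lists each stage label in order."""
    lines = [
        f'Clean vertical infographic titled "{title}".',
        f"Show {len(stages)} stages from top to bottom, connected by arrows.",
        "Label each stage exactly as follows:",
    ]
    # Numbering the labels makes it easy to check the output against the list.
    lines += [f'{i}. "{name}"' for i, name in enumerate(stages, start=1)]
    lines.append("Use consistent icons, generous spacing, and no extra text.")
    return "\n".join(lines)


print(infographic_prompt("How an AI image pipeline works", stages))
```

The numbered list doubles as a review checklist: when the draft comes back, you can compare each label in the image against the same list.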

Where this is most useful: blog visuals, LinkedIn explainers, newsletter charts, training decks, and internal documentation. Basically, any place where the goal is “make this easier to understand” rather than “win an art award.”

7. UI mockups, landing page concepts, and prototype screens

Sometimes a team does not need a finished design. It just needs a believable visual to react to. That could be a dashboard concept, a landing page hero section, a mobile onboarding screen, or a rough product visualization for a pitch deck. In these moments, speed matters more than pixel perfection.

GPT Image 2 is a strong fit for this kind of work because it can produce UI-like layouts with readable buttons, cards, labels, and interface text. That means you can go from an idea in your head to something reviewable in minutes.

Example scenario: a founder wants to show investors what an AI analytics dashboard might look like, including a chart area, a left-hand nav, and a “Generate Report” button. That does not need a full product design sprint yet. GPT Image 2 can generate a visual concept quickly, which is often enough to move the conversation forward.

Why this use case matters: a lot of product work happens before design resources are available. Having a tool that can bridge that gap is useful for PMs, founders, growth teams, and agencies.

8. Blog headers, YouTube thumbnails, and editorial visuals

Content teams need a steady stream of visuals, and they usually need them fast. Blog headers, newsletter hero images, article illustrations, Open Graph images, and YouTube thumbnails all live in that “important but repetitive” category. They matter a lot for click-through and presentation, but they are also expensive to produce manually at scale.

This is another place where GPT Image 2 fits naturally. It is especially useful when the image needs a headline, strong visual contrast, or an editorial feel that matches the content topic.

Example scenario: a YouTube creator wants a thumbnail for “Why I Stopped Using Midjourney for Product Ads.” The typical recipe is clear: emotional framing, readable title text, a strong central object, and a background that supports the story without being cluttered. GPT Image 2 can help create that direction quickly, especially when the prompt specifies layout and text hierarchy.

Where it helps most: content teams shipping weekly articles, creators running multi-channel publishing systems, and SEO teams that want stronger page presentation without waiting on design every time.

9. Training materials and educational content

Education is a surprisingly strong category for GPT Image 2 because learning materials often combine illustration, labels, sequencing, and clarity. That is exactly the kind of job where better text rendering and layout control make a big difference.

OpenAI’s guidance and Microsoft’s enterprise positioning both point toward this category. If you are building course materials, internal onboarding decks, visual SOPs, or explainer content, the model can help generate diagrams, process maps, labeled illustrations, and concept visuals that are much closer to usable than older tools managed.

Example scenario: an internal enablement team needs a graphic explaining the customer onboarding workflow: lead capture, qualification, demo, setup, activation, and retention. Instead of creating a plain slide with arrows and boxes, they can generate a more polished visual aid that feels closer to a designed explainer.

Why it works: educational visuals are less about artistic originality and more about clarity. That plays directly into GPT Image 2’s strengths.

10. High-precision edits to existing brand assets

Not every workflow starts from a blank canvas. In fact, a lot of the most valuable work in creative teams is just controlled editing: change the background, update the CTA, remove one object, localize the text, preserve the subject, keep the brand colors, do not break the composition. That kind of task is easy to describe and annoyingly time-consuming to do repeatedly.

This is where GPT Image 2 becomes especially practical. OpenAI’s guidance puts real emphasis on preserving layout and identity during edits, and Microsoft’s example of iteratively changing ad content in a subway mockup is a good illustration of how useful that can be in practice.

Example scenario: a marketing team has one solid campaign visual but needs five versions: a spring version, a summer version, a German-language version, a version with the badge moved, and a version with the background simplified for mobile. Instead of rebuilding all five manually, the team can use image editing prompts that say, essentially, “change only this, keep everything else the same.”
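As a sketch, those five versions can be written as five small edit prompts that share the same "keep" list. The `KEEP` list, the `changes` wording, and the `edit_prompt` helper are all hypothetical; adjust them to whatever your source image actually contains.

```python
# Elements that every version should preserve (illustrative list).
KEEP = ["the subject", "brand colors", "logo", "typeface"]

# One focused change per version, matching the scenario above.
changes = {
    "spring": "Replace the background with a soft spring garden scene.",
    "summer": "Replace the background with a bright summer beach scene.",
    "german": "Translate the headline and CTA text into German.",
    "badge_moved": "Move the badge to the top-left corner.",
    "mobile": "Simplify the background to a flat, uncluttered color for mobile.",
}


def edit_prompt(change, keep=KEEP):
    """Pair one change with an explicit instruction to preserve the rest."""
    kept = ", ".join(keep)
    return f"{change} Change only this. Keep everything else the same, including: {kept}."


edit_prompts = {name: edit_prompt(change) for name, change in changes.items()}
for name, prompt in edit_prompts.items():
    print(f"{name}: {prompt}")
```

Keeping each prompt to a single change makes it much easier to see which instruction caused a problem when one of the five versions comes back wrong.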

That is a huge shift. It means AI image generation is no longer just for net-new imagery. It can also become part of regular creative operations.

So, when should you actually choose GPT Image 2?

If your workflow depends on readable text, high-quality product visuals, UI-like layouts, or precise edits, GPT Image 2 is usually a very strong choice. OpenAI’s own recommendation is to use it as the default for new builds where image quality, editing reliability, and production value matter more than absolute lowest cost.

That said, not every use case needs the same level of fidelity. For low-stakes drafts or high-volume experimentation, cheaper or lower-quality settings may still be enough. But if you are creating customer-facing assets or trying to reduce rework, the better first-pass quality of GPT Image 2 can easily pay for itself.

Best practices if you want better results

Before wrapping up, here are a few practical habits that consistently help:

- Name the asset type clearly. Say whether it is a product mockup, infographic, UI screen, blog header, or banner ad.

- Put exact wording in quotes. This is especially useful for headlines, CTA buttons, labels, and package text.

- Describe what must stay unchanged. For edits, be explicit: keep layout, identity, angle, lighting, brand elements, and typography if needed.

- Use layout language. Mention placement, negative space, framing, and hierarchy.

- Do not overload the first prompt. Start with a strong base prompt, then refine with small follow-up edits.

That last point matters a lot. In real workflows, the best results usually come from iteration, not one giant “perfect prompt.”
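If you want to turn the habits above into something a team can run before sending a prompt, here is a deliberately crude sketch. The `check_prompt` function, the keyword lists, and the 120-word threshold are all assumptions of mine; simple substring checks like these will miss plenty of valid phrasings, so treat the output as a nudge, not a verdict.

```python
import re

# Keyword lists are illustrative and intentionally short.
ASSET_TYPES = ("product mockup", "infographic", "ui screen", "blog header", "banner ad")
LAYOUT_WORDS = ("placement", "negative space", "framing", "hierarchy")


def check_prompt(prompt: str, is_edit: bool = False, max_words: int = 120) -> list[str]:
    """Return a list of warnings; an empty list means no habit was missed."""
    lower = prompt.lower()
    warnings = []
    if not any(asset in lower for asset in ASSET_TYPES):
        warnings.append("Name the asset type.")
    # Look for at least one span wrapped in straight double quotes.
    if not re.search(r'"[^"]+"', prompt):
        warnings.append("Put exact wording in quotes.")
    if is_edit and "keep" not in lower:
        warnings.append("Say what must stay unchanged.")
    if not any(word in lower for word in LAYOUT_WORDS):
        warnings.append("Add layout language.")
    if len(prompt.split()) > max_words:
        warnings.append("Prompt is long; consider splitting into follow-up edits.")
    return warnings


print(check_prompt("make a nice picture"))
print(check_prompt('Blog header with the headline "Hello" and a clear text hierarchy.'))
```

The first call flags three missing habits, and the second returns an empty list. A check like this is most useful as a shared team convention, not as a quality gate.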

Final thoughts

The reason people are excited about GPT Image 2 is not that it suddenly replaces designers or photographers. That framing is too simplistic. The more realistic story is that it reduces bottlenecks in workflows that already exist. It helps teams move from idea to usable draft faster. It helps marketers test more concepts. It helps ecommerce teams produce more variants. It helps content teams ship more visuals. And it helps product teams communicate ideas before the full design process begins.

That is why the best GPT Image 2 use cases are not random art experiments. They are repetitive, practical, business-shaped tasks where control and readability matter.

References

- OpenAI Cookbook: GPT Image Generation Models Prompting Guide

- OpenAI Cookbook: Image Evals for Image Generation and Editing Use Cases

- Microsoft Foundry: Introducing OpenAI’s GPT-image-2

- MindStudio: GPT Image 2 Use Cases