Everyone can be a director now.

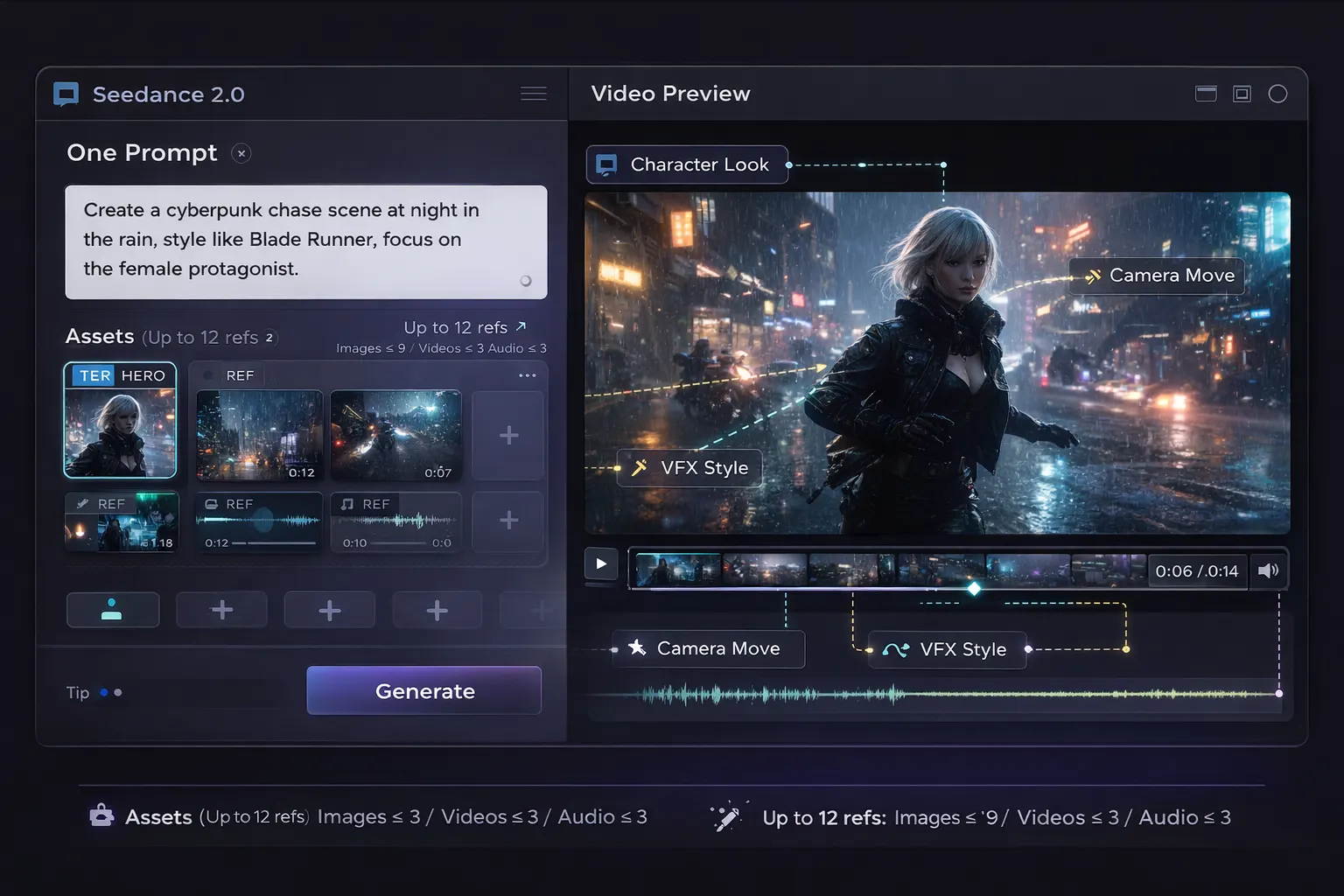

Seedance 2.0 is a multimodal AI video generator built for control. It turns your prompt into a coherent sequence with shots, sound, sync, and style locked in.

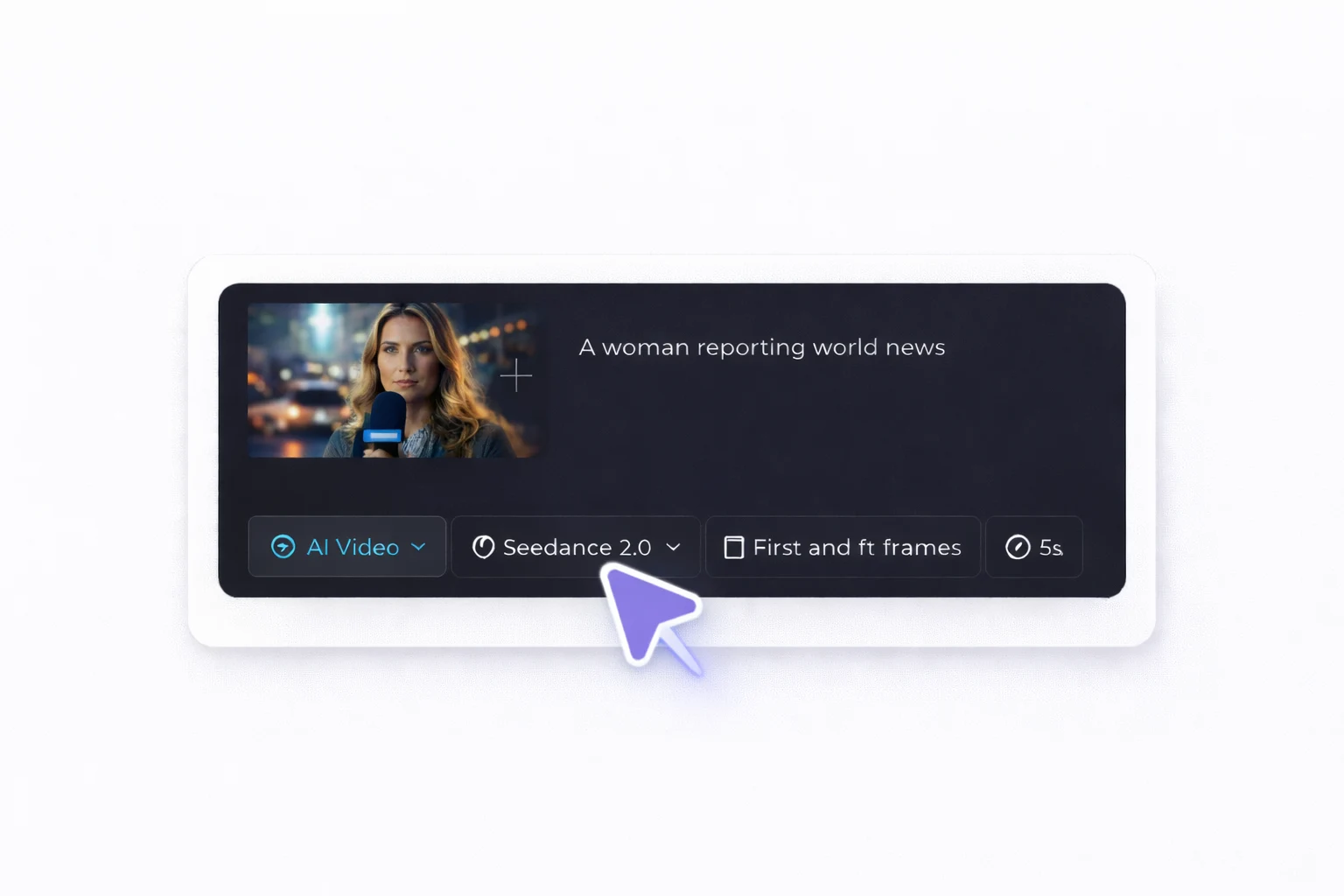

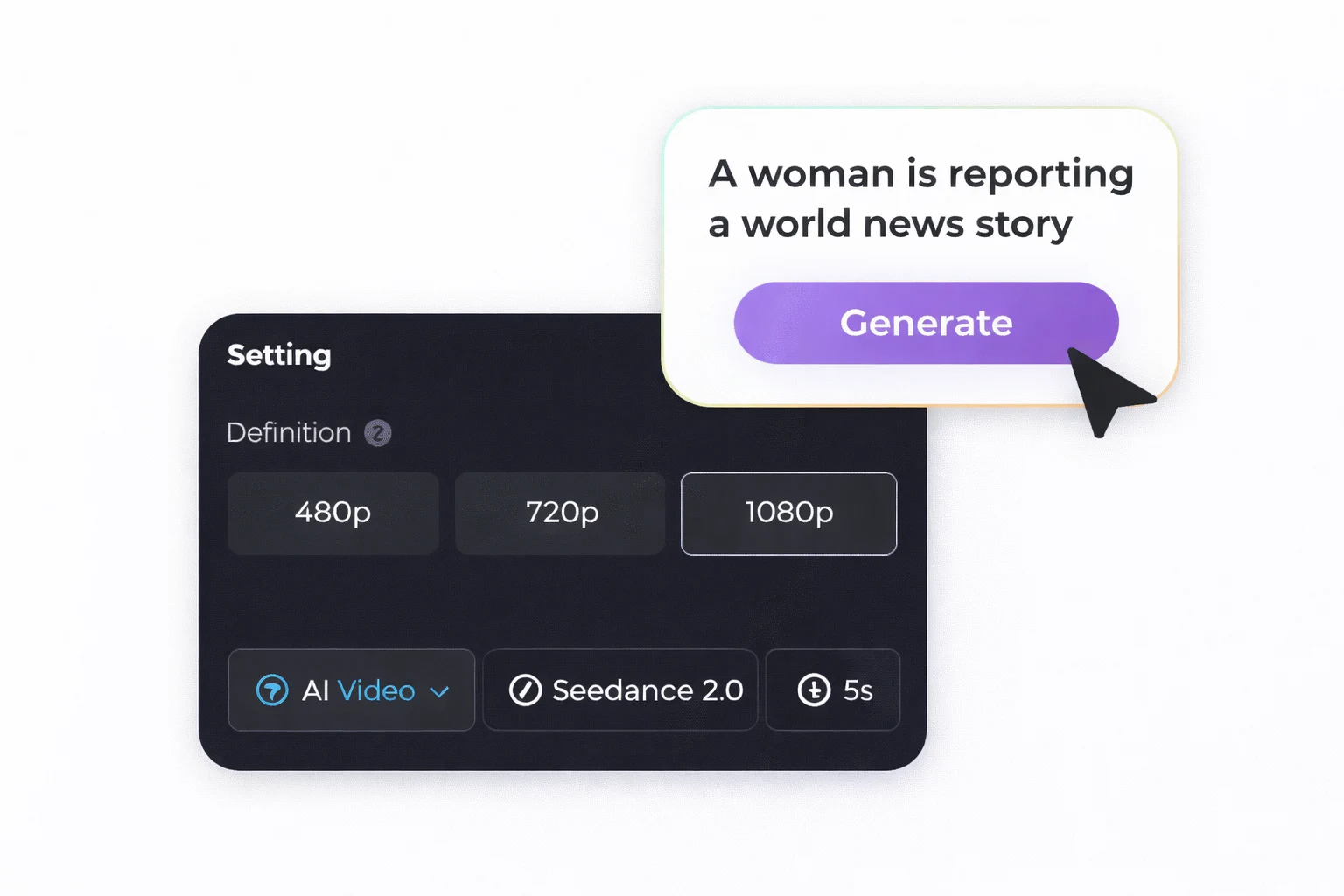

Open Ima Studio’s AI Video Generator and choose Seedance 2.0 as your model.

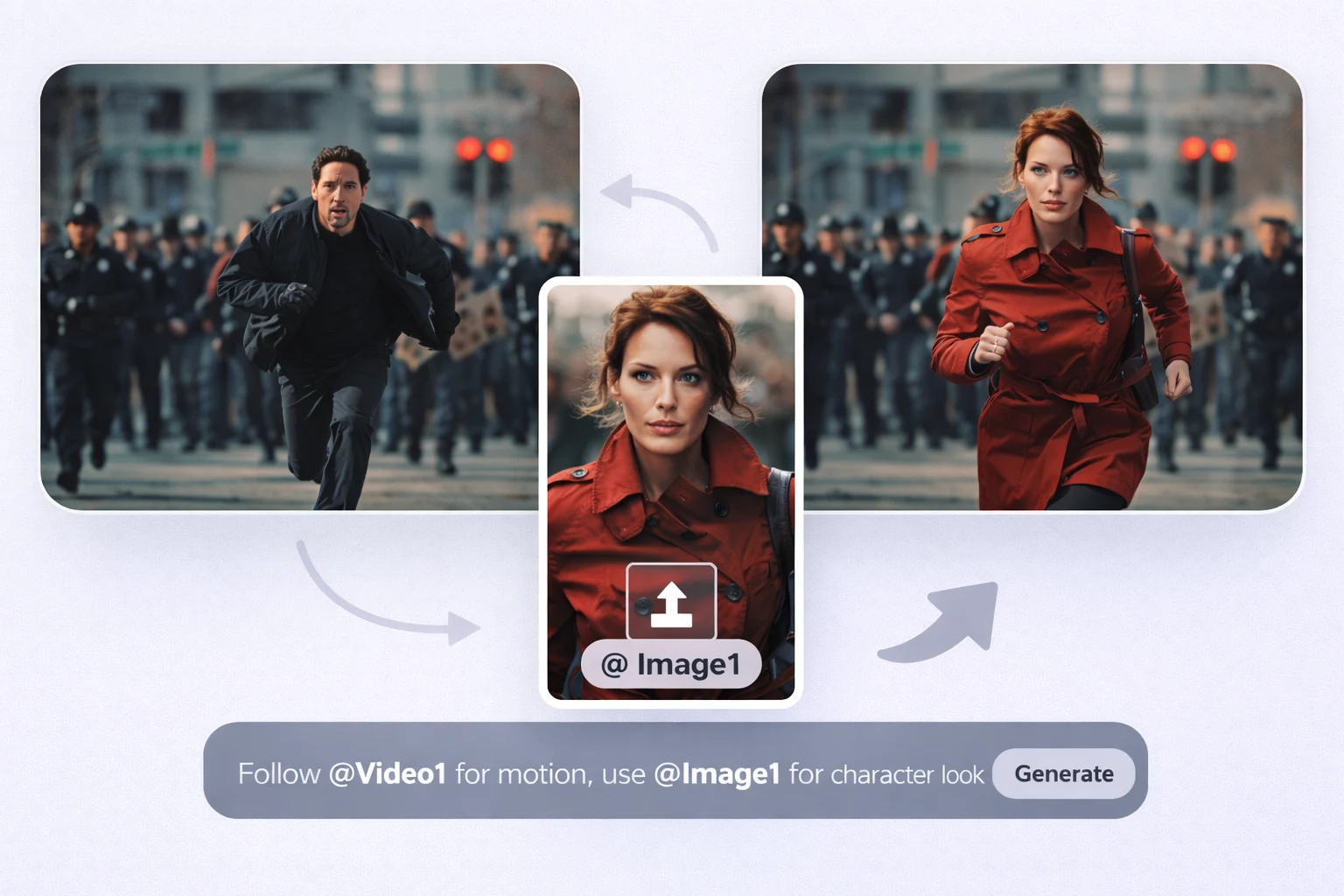

Add text, images, video clips, and/or audio. For more control, you can mix up to 12 reference files in total, with at most 9 images, 3 videos, and 3 audio clips.

Click Generate, review the result, then adjust the prompt or swap references to lock in the exact motion, camera style, and overall look.

Seedance 2.0’s strength isn’t just that it can generate—it’s that it’s more likely to produce a usable result on the first try. The same idea comes out more stable and closer to what you expected, which reduces trial-and-error time and cost.

You can “direct” the output with more precise language—pacing, emotion, performance intensity, and cinematic atmosphere. The result feels like you’re in control of the piece, instead of the model pulling you off track.

The visuals look cleaner and easier to read: the subject stands out, and small elements—like logos, textures, and edges—are easier to keep crisp. Overall, the output feels closer to a finished clip you can publish right away.

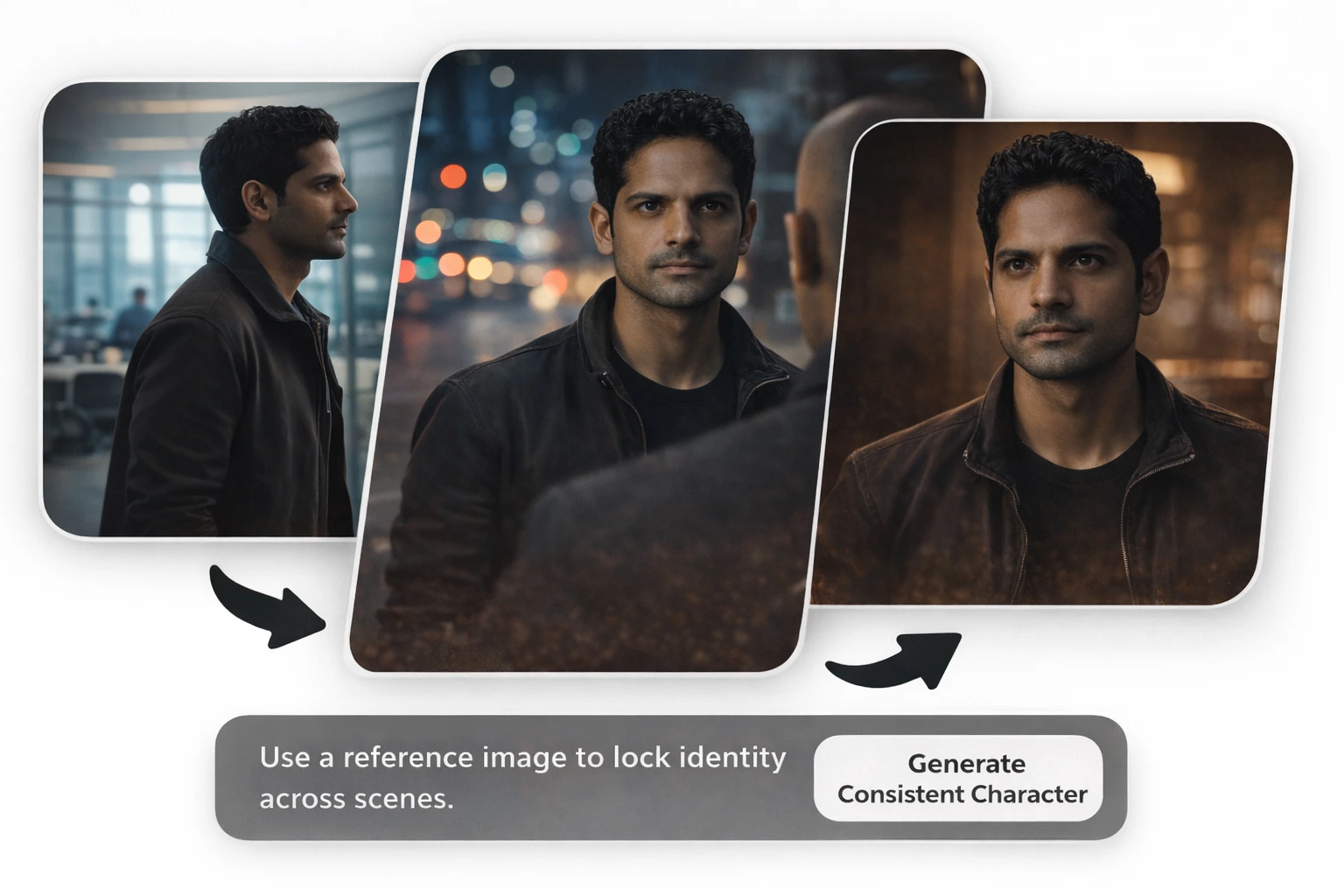

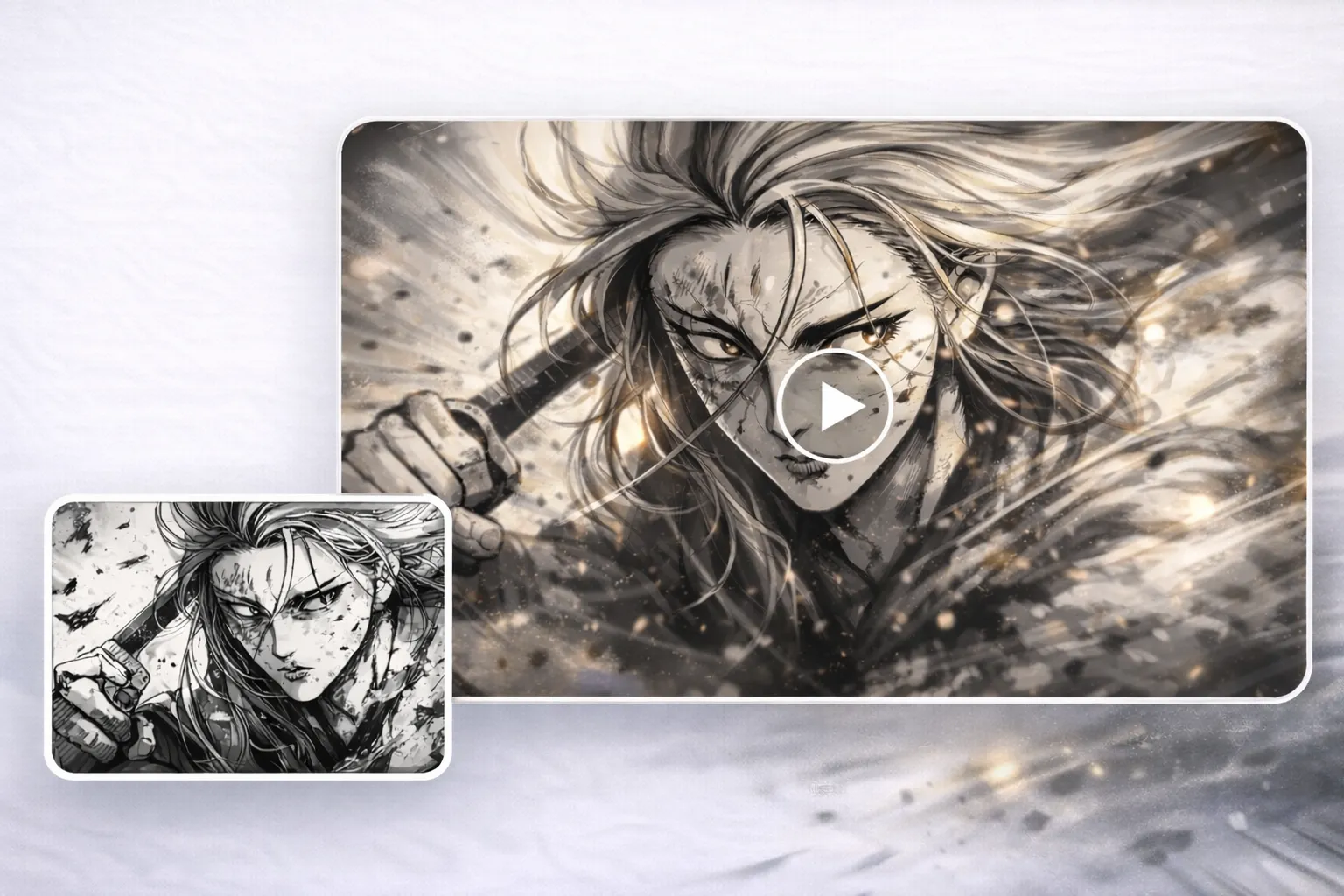

When you want a specific aesthetic, Seedance 2.0 is better at holding the style boundary. It’s less likely to drift into a different visual language, which makes it a strong fit for series content and brand visuals.

One concept can quickly expand into multiple variations—different hooks, pacing, shot emphasis, or vibes. It’s especially useful for social iteration, ad A/B testing, and batch production.

From talking clips and manga-style motion to product showcases and mood-driven shorts, Seedance 2.0 handles a broader range of formats. It’s a good way to unify different content styles under one consistent creation workflow.

Seedance 2.0 is a multimodal AI video generator that turns text prompts plus reference assets into short, directed video clips. Instead of only “generating visuals,” it’s designed to follow creative intent—style, pacing, and structure—more like a guided creation workflow.

Seedance 2.0 supports text, images, video clips, and audio as inputs. You can mix these inputs in one generation to guide what the model creates and how it behaves (look, motion, and sound).

You can use up to 12 assets per generation, with a cap of 9 images, 3 videos, and 3 audio clips. Video and audio length limits may also apply, depending on the interface.
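To make the asset limits concrete, here is a minimal Python sketch that checks a planned mix of reference files against the numbers stated above. It is only an illustration: `check_reference_mix`, `MAX_TOTAL`, and `MAX_PER_TYPE` are hypothetical names, not part of any Ima Studio or Seedance API, and the sketch ignores length limits.

```python
from collections import Counter

# Limits as stated in the text. These names are illustrative only,
# not an Ima Studio or Seedance API.
MAX_TOTAL = 12
MAX_PER_TYPE = {"image": 9, "video": 3, "audio": 3}


def check_reference_mix(kinds):
    """Return a list of problems for a proposed mix of reference files.

    `kinds` is a list of strings such as ["image", "image", "audio"].
    An empty result means the mix fits the stated limits.
    """
    problems = []
    counts = Counter(kinds)

    # Flag anything that is not one of the three reference types.
    for kind in counts:
        if kind not in MAX_PER_TYPE:
            problems.append(f"unknown reference type: {kind}")

    # The total cap applies on top of the per-type caps.
    if len(kinds) > MAX_TOTAL:
        problems.append(f"{len(kinds)} files exceeds the total cap of {MAX_TOTAL}")

    for kind, cap in MAX_PER_TYPE.items():
        if counts[kind] > cap:
            problems.append(f"{counts[kind]} {kind} files exceeds the cap of {cap}")

    return problems
```

Note that the per-type caps add up to 15, which is more than the total of 12, so you cannot max out every type at once. For example, 9 images plus 3 videos leaves no room for audio.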

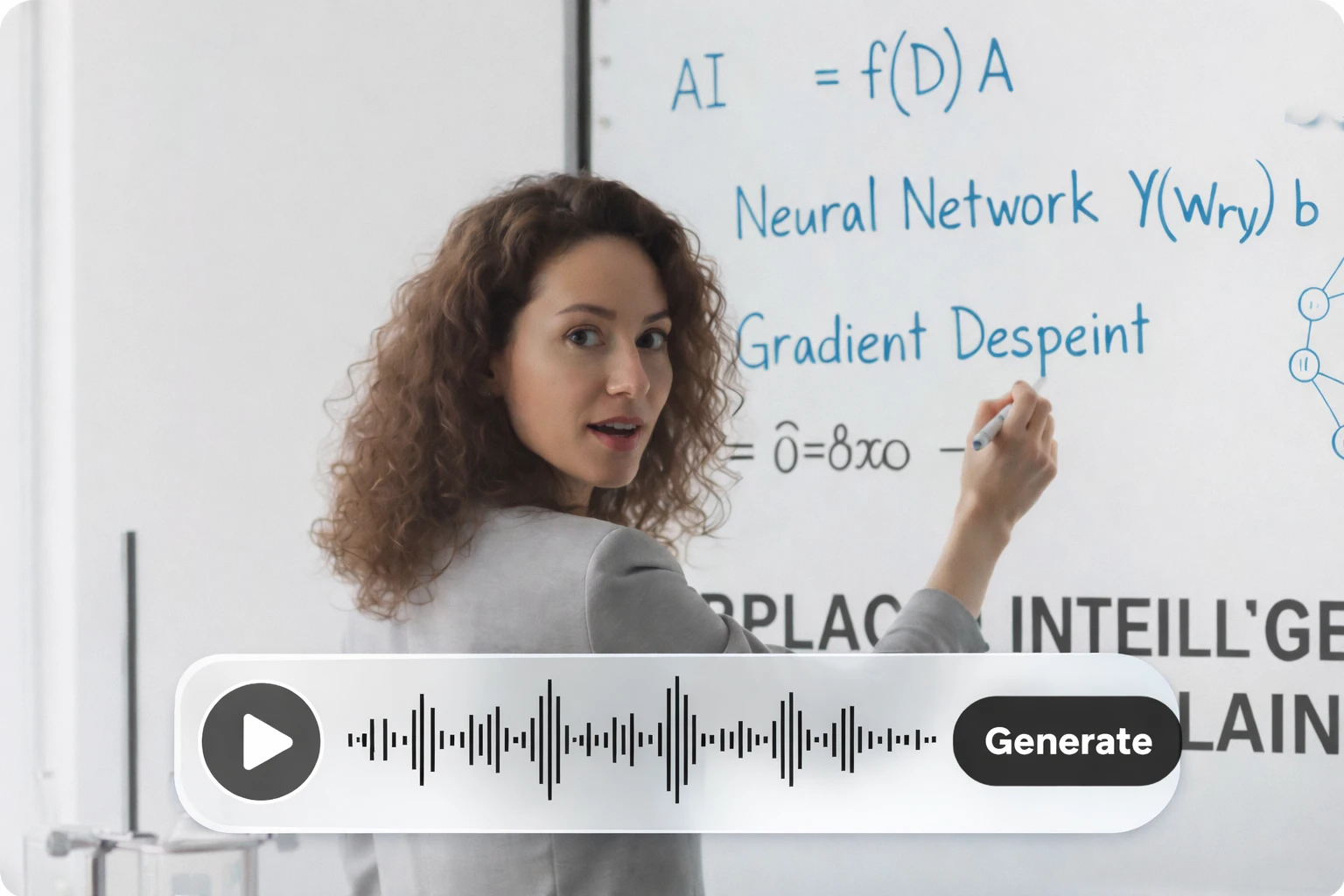

Yes—Seedance 2.0 is described as supporting built-in audio generation, including context-aware sound effects and background music. It can also sync video timing to uploaded audio or music beats for rhythm-driven edits.

You can upload audio as a reference track and generate visuals that align to timing cues—useful for beat-matched edits, music-video pacing, and audio-driven motion. When you want tight sync, keep the audio clean and specify what should land “on the beat.”

Yes—on Ima Studio, you can start with 200 free credits, which makes it easy to test Seedance 2.0 before spending anything. Credits are used per generation (the exact cost can vary by settings like duration/quality). If you’re new, the fastest way to “feel the model” is to run a few short clips first.

Yes. Seedance 2.0 supports text-to-video, image-to-video, and video-to-video workflows. The best choice depends on what you want: start from scratch (text), animate a still (image), or remake or transform an existing clip (video). On Ima Studio, you can keep your workflow in one place and iterate fast using your credits.

Yes—Seedance 2.0 supports AI video extension so you can continue a video from the last frame. It’s useful for finishing an action, adding a reaction beat, or smoothing an ending. For best results, describe what happens next in 1–3 concrete actions and list what must stay unchanged (character, outfit, setting).

That’s the idea behind local AI video edit workflows: change one element while keeping the rest consistent. On Ima Studio, your prompt should explicitly say what to change (“replace the logo”) and what to preserve (“keep camera, timing, background unchanged”). The more you constrain the edit, the more seamless it feels.
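One way to keep edit prompts consistent is to build them from two parts: the single change and an explicit keep-list. The Python sketch below is a hypothetical helper for composing that text before you paste it into the prompt box. `build_edit_prompt` is an invented name, and the wording it produces is just one reasonable phrasing, not a format the model requires.

```python
def build_edit_prompt(change, preserve):
    """Compose an edit prompt that names one change and an explicit keep-list.

    Hypothetical helper for illustration; not part of any Ima Studio API.
    """
    if not change.strip():
        raise ValueError("describe the one element to change")
    if not preserve:
        raise ValueError("list at least one thing to keep unchanged")

    # Join the keep-list into a single constraint sentence.
    keep = ", ".join(preserve)
    return f"{change.strip().rstrip('.')}. Keep {keep} unchanged."


prompt = build_edit_prompt("Replace the logo", ["camera", "timing", "background"])
print(prompt)  # Replace the logo. Keep camera, timing, background unchanged.
```

Requiring a non-empty keep-list is a deliberate choice in this sketch: it forces you to state what must not move, which is the part that is easiest to forget.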