If you’re searching for the best image-to-video AI, the honest answer is that it depends on what you need: photorealism, stylization, face fidelity, speed, or cost. This guide distills side-by-side tests we ran in Ima Studio’s Arena and in community workflows, then maps those results to clear recommendations and a practical, no-code workflow you can use today.

Quick picks: the best image to video AI by scenario

- Best for cinematic realism and strong motion: Kling or Veo 3 (access-dependent)

- Best for face/style adherence with a reference: Vidu Q2 (Reference)

- Best fast and creator-friendly option: Pika

- Best for stylized/anime looks: Seedance

- Best for quick, simple image-to-video drafts: Hailuo

- Most advanced physics-like world modeling (limited access): Sora 2

- Popular generalist with strong motion: Luma Dream Machine

Tip: You can run a one-click, same-prompt model showdown in Ima Arena. If you don’t choose a model, Ima Studio auto-selects an optimal one for your prompt and desired outcome.

How we evaluated “best image to video AI”

We used consistent prompts, the same input images, and equal length settings, then compared outputs via blind voting in Ima Arena. We focused on:

- Visual realism and scene fidelity: Does the video match the source image’s look and lighting? See our analysis: Visual Realism and Scene Fidelity.

- Subject identity and face consistency: Does the person/character stay on-model frame-to-frame?

- Temporal stability and motion quality: Are there flickers, distortions, or artifacts during movement?

- Prompt adherence and controllability: Does the model follow motion prompts, camera paths, reference/pose controls, and masks?

- Speed, length, and cost: Generation time, free tiers, paywalls, watermarks.

- Editing and retouch workflow: Can you fix hands, faces, and text quickly post-generation?

- Usage and rights: Export rights and attribution requirements.

Note on metrics: research metrics such as Fréchet Video Distance (FVD) and Learned Perceptual Image Patch Similarity (LPIPS) can approximate quality, but human preference often diverges from them, which is why we rely on blind votes in Arena.

- FVD reference: Unterthiner et al., “Towards Accurate Generative Models of Video: A New Metric & Challenges,” arXiv:1812.01717

- LPIPS reference: Zhang et al., “The Unreasonable Effectiveness of Deep Features as a Perceptual Metric,” CVPR 2018
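To make the blind-vote idea concrete, here is a minimal sketch of how pairwise votes could be tallied into a ranking. It is an illustration only, not Ima Arena's actual scoring: the `(winner, loser)` vote format, the `win_rates` function, and the placeholder model names are all assumptions, and the sample votes are made up.

```python
from collections import Counter


def win_rates(votes):
    """Return {model: wins / appearances} from (winner, loser) pairs.

    The vote format is an assumption for illustration; it is not
    Ima Arena's actual data model or scoring method.
    """
    wins = Counter()
    appearances = Counter()
    for winner, loser in votes:
        wins[winner] += 1
        appearances[winner] += 1
        appearances[loser] += 1
    return {model: wins[model] / appearances[model] for model in appearances}


# Made-up sample votes with placeholder names, not real test results.
sample_votes = [
    ("model_a", "model_b"),
    ("model_a", "model_c"),
    ("model_b", "model_c"),
    ("model_c", "model_a"),
]

rates = win_rates(sample_votes)
ranking = sorted(rates, key=rates.get, reverse=True)
print(ranking)  # ['model_a', 'model_b', 'model_c']
```

A plain win rate is the simplest choice; with many models and uneven matchups, a rating scheme such as Elo would account for opponent strength, at the cost of more bookkeeping.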

Best image to video AI: model comparison

| Model | Core strengths | Best for | Typical limits | Where to try |
|---|---|---|---|---|
| Kling | Strong realism, dynamic motion, good camera moves | Cinematic promos, lifestyle realism | Access and length may vary; regional availability | Ima Studio: Kling |
| Vidu Q2 (Reference) | High adherence to a reference image; stable faces | Face consistency, brand/style continuity | Availability depends on region/account | Vidu Q2 guide |
| Pika | Fast iterations; friendly UI; strong stylization options | Creators prototyping, social content, quick drafts | Shorter clips; occasional flicker on complex motion | Ima Studio: Pika |
| Seedance | Vivid anime/stylized looks; fun character motion | Anime, stylized shorts, motion experiments | Less photorealistic; text legibility varies | Ima Studio: Seedance |
| Hailuo | Quick image-to-video drafts; simple motion | Lightweight mockups, storyboard beats | Advanced controls may be limited | Ima Studio: Hailuo |
| Veo 3 | High-end visual quality; cinematic feel | Premium ad-style visuals | Limited access; usage terms apply | Ima Studio: Veo 3 |
| Sora 2 | Advanced scene/world dynamics; physics-like consistency | Complex scenes; long-horizon motion (access-limited) | Invite-only for many users | Ima Studio: Sora 2 |
| Luma Dream Machine | Strong motion and generalization; widely used | General image-to-video creation | Credits/limits depend on plan | Luma (external) |

Note: Model capabilities, limits, and access can change rapidly. For the most current results, run your exact prompt across multiple models in the Ima Arena and review community templates in the Ima Studio Community.

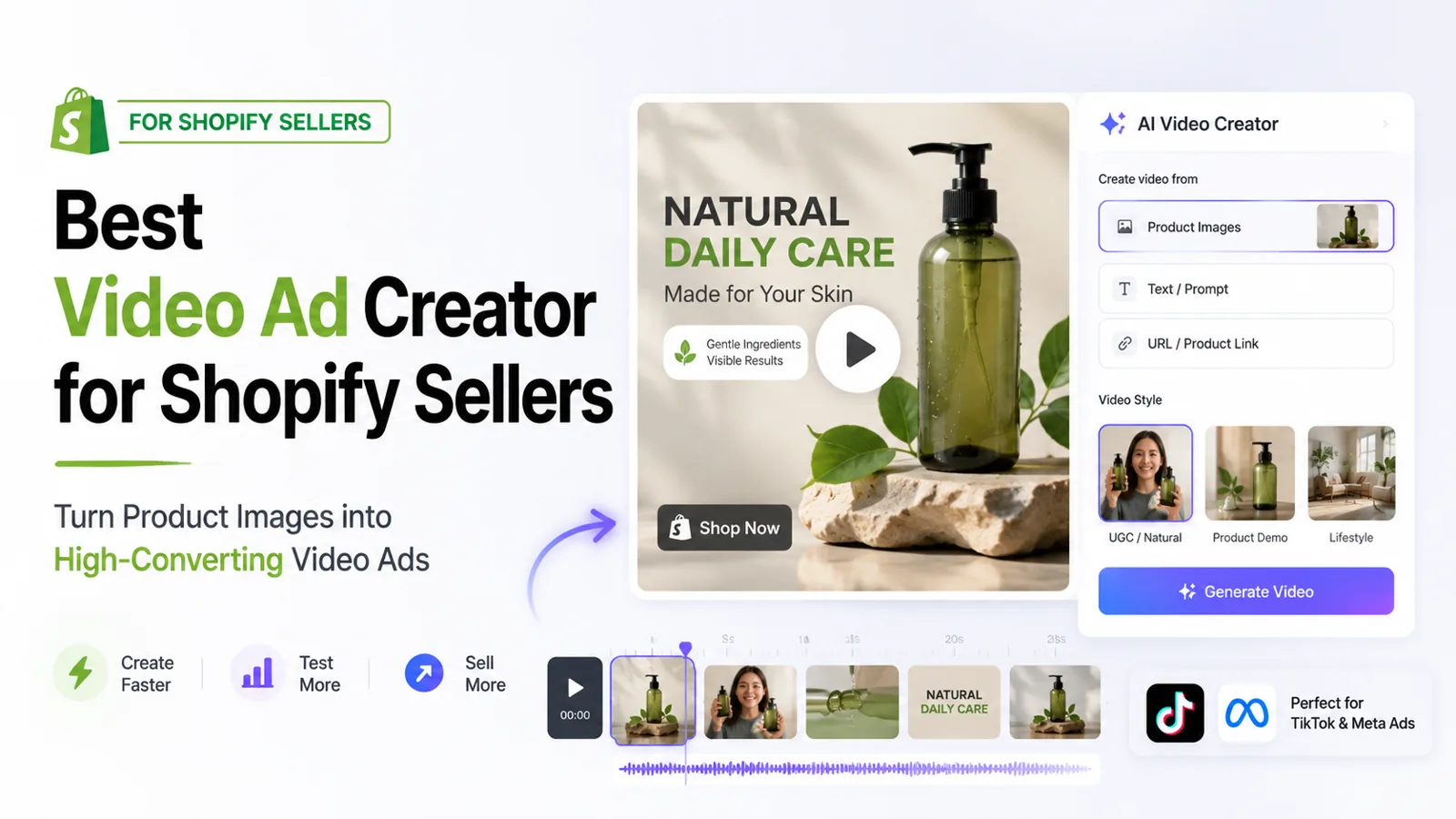

A smarter workflow: generate, compare, and retouch in one place

- Start with a strong image. If needed, enhance quality or remove watermarks first.

- Open Ima Studio and upload your image. Choose a generator (e.g., Kling, Pika, Seedance, Veo 3, Sora 2).

- Write a motion prompt. Be explicit about camera moves, mood, and duration. Example: “Slow dolly-in, soft golden-hour light, subtle wind in hair, 5–6 seconds.”

- Set controls if available: motion strength, camera path, reference mode (e.g., Vidu Q2 Reference), face protection, or masks.

- Run an Arena matchup: send the same prompt to multiple models using Ima Arena and pick your favorite output.

- Retouch in one flow. Use our unified generate+retouch workflow (see Testing Google Nano Banana: Unified AI Generate & Retouch Workflow) to fix hands, faces, and text, or to upscale.

- Export and iterate. For advanced looks, try templates from the Ima Studio Community.

If you don’t want to pick a model, Ima Studio intelligently chooses one based on your prompt and the crowd-voted performance signals in Arena.
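The workflow itself needs no code, but if you reuse the same prompt structure often, a small helper can keep motion prompts consistent. The sketch below is a hypothetical convenience, not part of Ima Studio: the function name `build_motion_prompt` and its parameters are assumptions chosen to mirror the example prompt in the steps above.

```python
def build_motion_prompt(camera, lighting, motion, min_seconds, max_seconds):
    """Join the parts of a motion prompt into one comma-separated string.

    Hypothetical helper for illustration; empty parts are skipped.
    """
    if min_seconds <= 0 or max_seconds < min_seconds:
        raise ValueError("duration range must be positive and ordered")
    if min_seconds == max_seconds:
        duration = f"{min_seconds} seconds"
    else:
        # \u2013 is an en dash, matching the "5–6 seconds" style used above.
        duration = f"{min_seconds}\u2013{max_seconds} seconds"
    parts = [camera, lighting, motion, duration]
    return ", ".join(part.strip() for part in parts if part.strip())


prompt = build_motion_prompt(
    "Slow dolly-in", "soft golden-hour light", "subtle wind in hair", 5, 6
)
print(prompt)
```

Keeping camera, lighting, subject motion, and duration as separate arguments makes it easy to change one element at a time between Arena runs and see which change actually moved the result.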

How to choose the best image to video AI for your use case

- Photoreal human subjects: prioritize face/identity consistency and gentle motion. Try Kling or Vidu Q2 Reference.

- Stylized content or anime: choose stronger stylization controls. Try Seedance or Pika.

- Fast iterations on a budget: test Pika and Hailuo first.

- Premium, cinematic shots: consider Veo 3, or Kling where it is available in your region.

- Longer sequences or complex physics: when accessible, explore Sora 2. For access tips, see How to Get a Sora 2 Invite Code.

For a broader market overview and our lab-tested notes across generators, see Best AI Video Generator 2025: Real Tests in Ima Studio.
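If a project spans more than one of the use cases above, the recommendations can be combined into a shortlist. The following is a minimal sketch that simply encodes this section's suggestions as a lookup table; the dictionary keys and the `shortlist` function are hypothetical labels for illustration, and the ordering reflects only how often a model recurs, not measured quality.

```python
# Recommendations copied from the use-case list above.
# The keys are hypothetical labels invented for this sketch.
RECOMMENDATIONS = {
    "photoreal_humans": ["Kling", "Vidu Q2 (Reference)"],
    "stylized_or_anime": ["Seedance", "Pika"],
    "fast_on_a_budget": ["Pika", "Hailuo"],
    "premium_cinematic": ["Veo 3", "Kling"],
    "complex_physics": ["Sora 2"],
}


def shortlist(use_cases):
    """Return suggested models, most frequently recommended first."""
    counts = {}
    for use_case in use_cases:
        # An unknown use case raises KeyError rather than guessing.
        for model in RECOMMENDATIONS[use_case]:
            counts[model] = counts.get(model, 0) + 1
    # sorted() is stable, so ties keep first-seen order.
    return sorted(counts, key=lambda model: -counts[model])


print(shortlist(["stylized_or_anime", "fast_on_a_budget"]))
# ['Pika', 'Seedance', 'Hailuo']
```

A shortlist like this is only a starting point; running the same prompt across the shortlisted models in Arena is still the way to pick a winner.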

Quality troubleshooting and pro tips

- Reduce motion complexity to stabilize faces; keep lighting consistent and avoid extreme camera moves on the first pass.

- Use reference/identity modes where available (e.g., Vidu Q2 Reference) and keep hair/background similar to the input image.

- Fix artifacts after generation: inpaint hands, refine eyes and teeth, and stabilize edges with our retouch workflow (see the Nano Banana guide).

- If outputs look “AI-ish,” this explainer helps diagnose causes and fixes: Why Are AI Videos So Bad?

Leverage community templates and voting

Thousands of creators share image-to-video presets in the Ima Studio Community. Run a template in one click, then swap in your image to reproduce the look. To validate your choice, launch an Arena matchup: blind votes quickly reveal which model works best for your exact prompt.

FAQs about the best image to video AI

Is there a free option?

Yes—many tools offer free tiers or trials. In Ima Studio, you can test models like Pika or Hailuo quickly, then upgrade if you need longer clips or watermark-free exports.

Which model is best for faces?

For identity adherence, use reference modes when available (see Vidu Q2 Reference). Keep motion moderate and lighting close to your source image.

How long can my videos be?

It varies by model and plan. Premium models (e.g., Veo 3) may allow longer clips. For the latest limits, run tests in Ima Studio and check per-model settings.

Do I own the outputs?

Usage rights depend on the model and plan. Review each model’s terms (Ima exposes these per tool) and see our site policies: Terms and Privacy Policy.

The best image to video AI depends on your goal: realism (Kling, Veo 3), reference/face fidelity (Vidu Q2), speed (Pika, Hailuo), or stylization (Seedance). Because model quality changes fast, the safest path is to A/B your exact prompt in the Ima Arena, then finalize with our retouch pipeline. Start now in Ima Studio—upload an image, compare models, and ship a polished video in minutes.